AI in Georgia courts raises new questions after Clayton County prosecutor admits citing fake cases: "It's been a quiet, rolling thunder"

Artificial intelligence is already reshaping how justice is delivered in America's courtrooms. But in Georgia, a recent case out of Clayton County is raising urgent questions about whether the legal system is ready.

A prosecutor admitted to using AI to help write court filings that cited legal cases that didn't exist.

Now, legal experts warn: what happened in one Georgia courtroom could be a preview of a much larger shift already underway.

A local case exposes a growing national reality

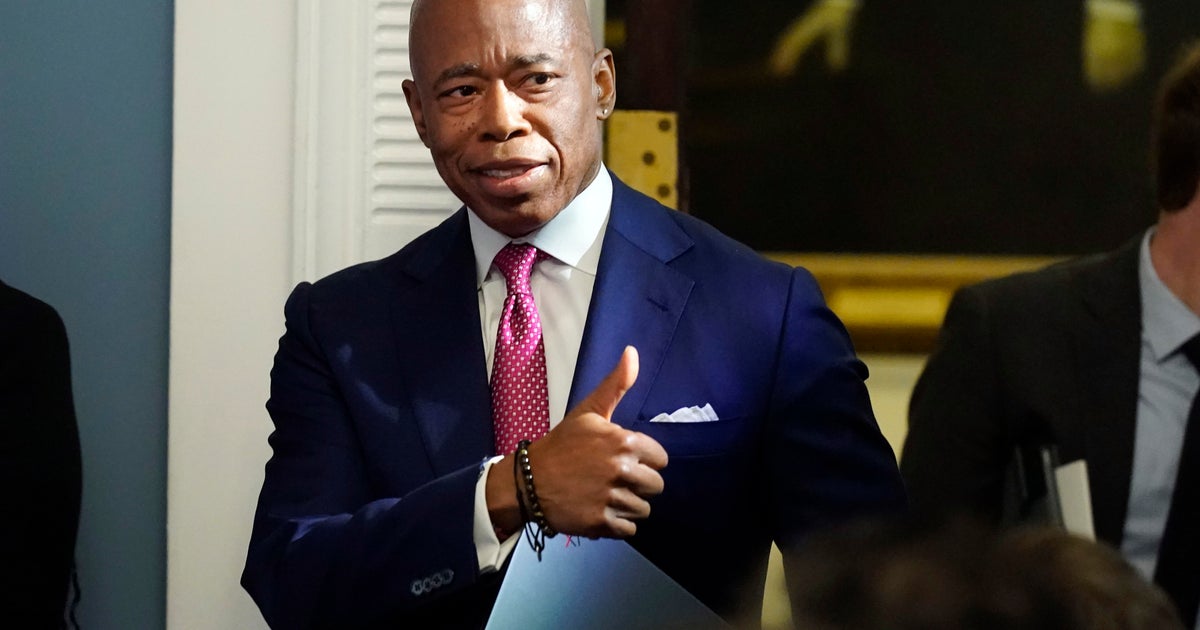

In Clayton County, District Attorney Tasha Mosley formally apologized to the Supreme Court of Georgia after one of her prosecutors submitted a legal brief containing multiple fabricated citations.

According to court officials, at least five cited cases did not exist, and several others were misrepresented. The prosecutor later admitted to using artificial intelligence in drafting the filing.

The prosecutor responsible could face serious consequences, including possible discipline and referral to the State Bar. The episode is also bringing broader scrutiny to how AI is being used inside Georgia's legal system.

"This is a really significant tool... but we've got to double-check," one Atlanta trial attorney said, underscoring the profession's growing anxiety about the technology.

Behind the scenes: AI is already in the courtroom

While the Clayton County case may feel shocking, experts say it's not an outlier but a warning sign.

In an interview with CBS News Atlanta, legal tech expert Cat Casey said AI adoption among judges is happening faster, and more quietly, than many realize.

"There was a report suggesting about 60% of judges are using AI in some capacity," Casey said, noting the number may be inflated but still reflects a significant shift.

From legal research to drafting and case analysis, AI tools are increasingly embedded in everyday judicial work, according to Casey.

"It's been a quiet, rolling thunder," she said.

The State Bar of Georgia acknowledges that artificial intelligence is rapidly changing the legal profession, and has issued guidance for lawyers adopting these new tools.

The guidance reminds attorneys that while AI can streamline tasks like legal research and drafting, lawyers remain responsible for reviewing all AI-generated work and ensuring it meets professional and ethical standards.

The Bar emphasizes that safeguarding client confidentiality is critical when using AI, advising attorneys to thoroughly vet technology providers and disclose the use of AI to clients when appropriate.

Ultimately, AI cannot replace a licensed lawyer's judgment or advice, and attorneys must avoid facilitating the unauthorized practice of law. The Bar encourages ongoing education as technology continues to evolve.

Why Georgia courts may feel the impact faster

For Atlanta and the broader metro area, the stakes could be even higher.

Casey pointed to the region's high-volume court systems, often described as "rocket dockets," as a key factor.

"When you have that volume, the likelihood a judge is going to want to use AI to be more efficient is pretty high," she said.

In other words, AI may not just be coming to Georgia courts; it may already be deeply embedded.

For everyday Georgians, that raises new questions:

· Is AI being used in your case?

· Was the information verified?

· Who is accountable if something goes wrong?

Efficiency vs. justice

Supporters of AI say the technology could transform access to justice.

Cases could move faster. Legal costs could drop. More people could afford representation.

"If something that used to cost $20,000 now costs $5,000, more people get their day in court," Casey said.

But critics worry speed could come at a cost.

Bias embedded in data could reinforce disparities. Overreliance on automation could weaken human judgment. And mistakes like the one in Clayton County could undermine trust.

Who's responsible when AI gets it wrong?

Legally, the answer is clear: humans are still on the hook.

"If I'm an attorney and I submit something AI-generated without checking it, I'm responsible," Casey said.

That responsibility includes ethical duties to verify information and to supervise the tools lawyers use, AI included.

In other words, the technology may be new. The responsibility is not.

The trust question

At its core, this moment is about more than technology; it's about public trust.

Casey says transparency will be key.

"It's not the Terminator," she said. "It's more like Iron Man: you still need the human in the driver's seat."

For Georgia courts, that means making clear:

· How AI is being used

· Where human judgment begins and ends

· And how errors are caught and corrected

The bottom line

Artificial intelligence is not yet replacing judges, prosecutors, or defense attorneys, but it is influencing how decisions are made.

And as the Clayton County case shows, the consequences of getting it wrong are real.

The question now isn't whether AI belongs in the courtroom.

It's whether the system can keep up with it.