Facebook unveils new plan to try to curb fake news

After months of public pressure, Facebook is announcing new steps it is taking to curb the spread of fake news on the social network. The plan includes new tools to make it easier for Facebook users to flag fake stories, as well as a collaboration with the Poynter Institute, a highly respected journalism organization, to independently investigate claims.

Here’s how Facebook says the new process will work:

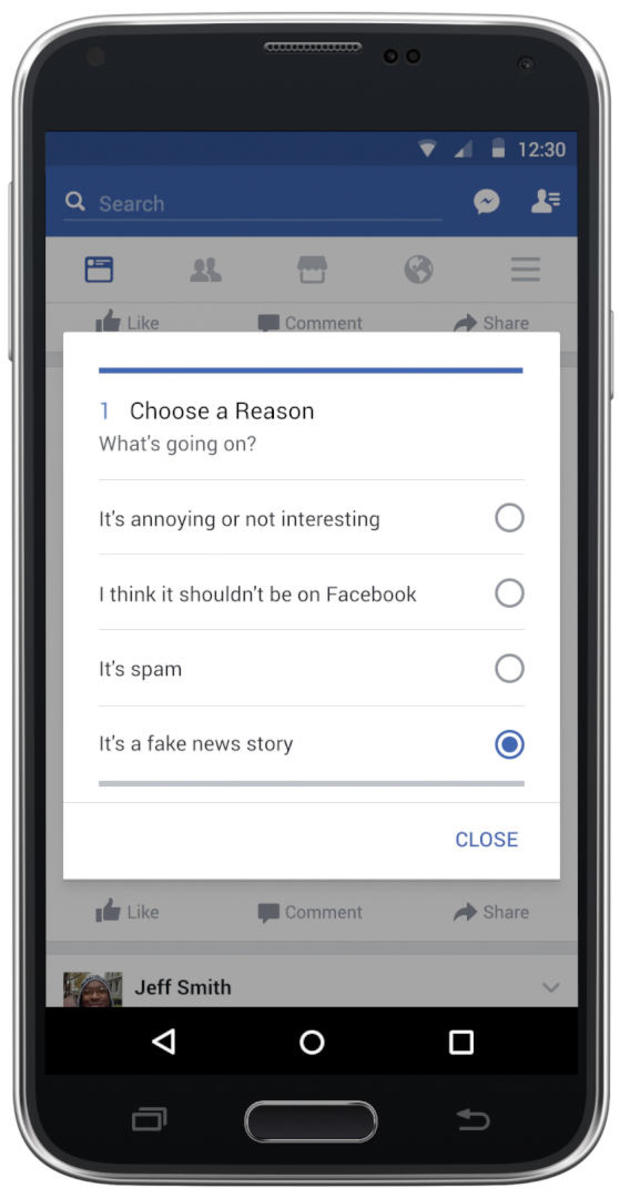

Facebook will rely primarily on individual users to flag obvious hoaxes on the platform. Though users can currently flag stories by clicking on the upper right corner of the post, the company said it’s experimenting with several methods to make the flagging process easier.

Once posts are flagged as potential fakes, third parties get involved. Specifically, Facebook is forging a new partnership with Poynter, which since 2015 has mobilized fact-checkers from all over the world under an initiative called the International Fact Checking Code of Principles. Facebook said that based on reports from users as well as “other signals,” which the company did not elaborate on, it will refer suspicious stories to these fact-checking organizations for vetting.

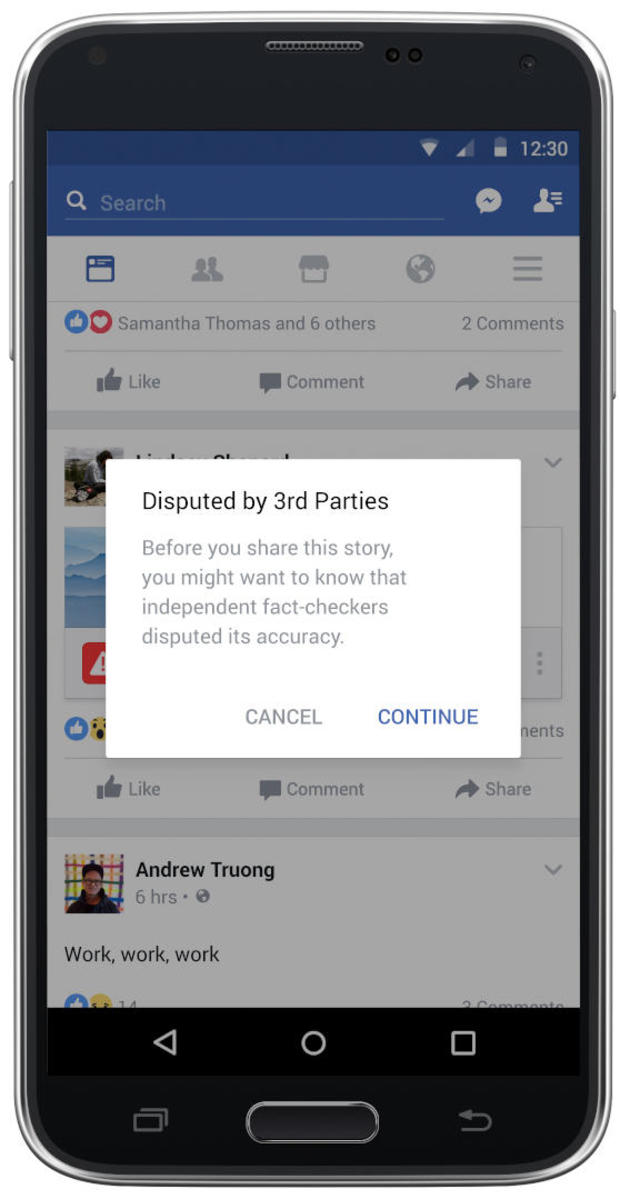

If Poynter’s fact-checkers determine the story is fake, it will be marked as “disputed” on Facebook. The story will still appear on Facebook, but with the “disputed” flag and a link to a corresponding article explaining the reasons it should not be trusted.

Disputed stories will rank lower on users’ News Feeds and companies will not be allowed to promote them as ad content. Users will still be allowed to share the disputed stories on Facebook, but the stories will carry those warnings with them.

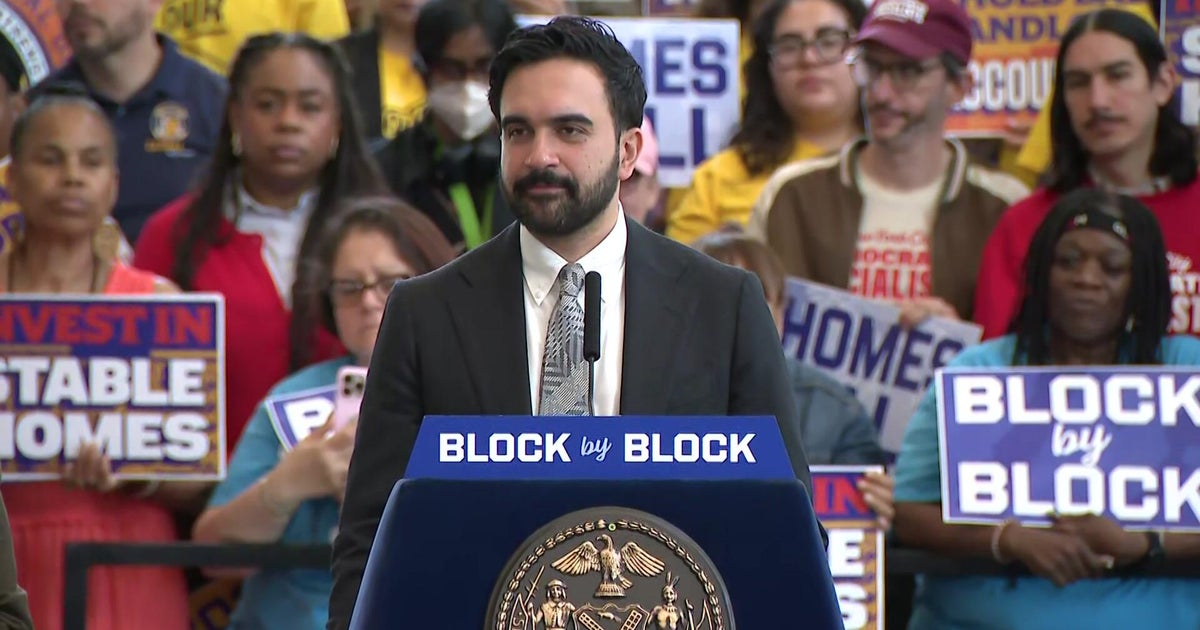

“We believe in giving people a voice and that we cannot become arbiters of truth ourselves, so we’re approaching this problem carefully. We’ve focused our efforts on the worst of the worst,” Facebook’s vice president of News Feed, Adam Mosseri, said in the statement.

Poynter has reported extensively on Facebook’s fake news problem in recent months. Alexios Mantzarlis, head of Poynter’s international fact checking network, wrote an extensive critique of the social network’s approach to fake news before the election — the tipping point at which the problem received widespread attention — called “Facebook’s fake news problem won’t fix itself.” In it, he wrote: “Facebook isn’t just another medium hoaxers can use to spread misinformation, or a new source of bias-confirming news for partisan readers. It turbocharges both these unsavory phenomena.”

Facebook, long believed to be applying sophisticated analysis to users’ every click, also shared that it’s experimenting with ways to penalize articles that users appear to distrust. If clicking on and reading an article appears to make users significantly less likely to share it, Facebook may interpret that as a sign that the story has misled readers.

“We’re going to test incorporating this signal into ranking, specifically for articles that are outliers, where people who read the article are significantly less likely to share it,” Mosseri said.

Facebook did not elaborate on how it could differentiate between articles individuals decided not to share for subjective reasons (e.g. the article was too long for users’ liking or included writing they perceived as bad) and articles individuals decided not to share because they found them disreputable.
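To make the idea concrete, here is a rough sketch of how such an outlier signal could be computed. Facebook has not published its method, so everything here is an assumption for illustration: the `ArticleStats` fields, the `min_readers` cutoff and the `ratio_threshold` value are all hypothetical. The sketch compares the share rate of people who read an article with the share rate of people who saw only the headline, and flags articles where readers share far less often. As noted above, a low ratio alone cannot tell a misleading story from one that is merely long or badly written.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class ArticleStats:
    """Hypothetical per-article counters; not a real Facebook data model."""
    url: str
    readers: int                  # users who clicked through and read
    shares_after_reading: int     # shares made by those readers
    non_readers: int              # users who saw the post but did not click
    shares_without_reading: int   # shares made without clicking through


def share_rate_ratio(stats: ArticleStats) -> Optional[float]:
    """Share rate among readers divided by share rate among non-readers.

    Returns None when the ratio is undefined (no data in a group).
    """
    if stats.readers == 0 or stats.non_readers == 0:
        return None
    if stats.shares_without_reading == 0:
        return None
    read_rate = stats.shares_after_reading / stats.readers
    unread_rate = stats.shares_without_reading / stats.non_readers
    return read_rate / unread_rate


def flag_outliers(articles: List[ArticleStats],
                  min_readers: int = 100,
                  ratio_threshold: float = 0.5) -> List[str]:
    """Return URLs whose readers share far less often than non-readers.

    Both thresholds are assumed values chosen only for this example.
    """
    flagged = []
    for article in articles:
        if article.readers < min_readers:
            continue  # too little data to judge
        ratio = share_rate_ratio(article)
        if ratio is not None and ratio < ratio_threshold:
            flagged.append(article.url)
    return flagged


if __name__ == "__main__":
    sample = [
        ArticleStats("example.com/a", 1000, 10, 1000, 50),
        ArticleStats("example.com/b", 1000, 40, 1000, 50),
    ]
    print(flag_outliers(sample))
```

In this sample, readers of the first article share it at one-fifth the rate of non-readers, so only that article is flagged.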

Lastly, Facebook said it is targeting the money behind fake news, specifically the legions of spammers who disseminate content that masquerades as real news through URLs that are intentionally similar to the URLs of well-known news organizations — thus deceiving readers who are not critical of their sources. The spammers then make money off the ads on those dubious sites.

To target these profit centers, Facebook said it is eliminating the ability to “spoof” or impersonate domains on its site as well as taking a more critical look at certain content sources to “detect where policy enforcement actions might be necessary,” the company said.
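Facebook did not say how it would detect spoofed domains. One simple approach, sketched here purely as an illustration, is to compare a link's hostname against a list of well-known news domains and flag near matches. The `KNOWN_DOMAINS` list, the `max_distance` value and the function names are all assumptions, not anything Facebook has described.

```python
from typing import Optional
from urllib.parse import urlparse

# Hypothetical list of trusted news domains, for illustration only.
KNOWN_DOMAINS = ("cbsnews.com", "nytimes.com")


def edit_distance(a: str, b: str) -> int:
    """Levenshtein distance: minimum single-character edits from a to b."""
    prev = list(range(len(b) + 1))
    for i, ca in enumerate(a, 1):
        cur = [i]
        for j, cb in enumerate(b, 1):
            cur.append(min(prev[j] + 1,                 # deletion
                           cur[j - 1] + 1,              # insertion
                           prev[j - 1] + (ca != cb)))   # substitution
        prev = cur
    return prev[-1]


def hostname(url: str) -> str:
    """Lowercased hostname of a URL, without a leading 'www.'."""
    host = urlparse(url).hostname or ""
    return host[4:] if host.startswith("www.") else host


def lookalike_of(url: str, max_distance: int = 2) -> Optional[str]:
    """Return the known domain this URL appears to imitate, or None.

    A host is treated as a lookalike if it is within max_distance edits
    of a known domain, or if it appends an extra suffix to one
    (for example 'nytimes.com.co').
    """
    host = hostname(url)
    if not host or host in KNOWN_DOMAINS:
        return None  # empty, or a genuine known domain
    for known in KNOWN_DOMAINS:
        if host.startswith(known + "."):
            return known
        if edit_distance(host, known) <= max_distance:
            return known
    return None


if __name__ == "__main__":
    for link in ("http://nytimes.com.co/story",
                 "http://www.cbsnewz.com/article",
                 "https://www.cbsnews.com/news/"):
        print(link, "->", lookalike_of(link))
```

A real system would need a far larger domain list and would have to avoid penalizing legitimate sites whose names happen to resemble a major outlet's.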

CNET News executive editor Roger Cheng said the company really needed to take action to address the problem. “Facebook has been under fire for this fake news flap. They obviously needed to do something. A lot of these elements seem like they’re logical steps to kind of help with the fake news scourge,” Cheng told CBS News.

Facebook’s latest announcement provides some answers, but leaves many questions. For instance, what is the threshold required for the number of users to flag a certain piece of content before that content is sent up the food chain for verification? If swarms of Russia-paid trolls coordinate, for instance, to flag The New York Times’ recent reports on Russia’s hacking attempts on the U.S. election, will Poynter’s fact checkers have to spend time independently verifying these reports? How will Poynter prioritize the content that’s flagged, and what guidelines will it use for fact checking?

“The idea of making it easier to report fake stories is a good one in theory, but that could also be something that could be abused,” Cheng said.

Furthermore, there was little information about what the oversight process would be between Facebook and the Poynter fact-checkers. A Facebook spokesperson told Business Insider that Facebook is not paying the organizations.

The pressure on Facebook to announce concrete steps to combat fake news has been building for months, but reached a fever pitch after the election. In the weeks since, several outside developers have rolled out plug-ins to help users identify fake news on the social network, creating added pressure on Facebook to act aggressively and more quickly to target what is now a well-known issue.

In a poll released Thursday by the Pew Research Center, two-thirds of Americans say the phenomenon of fake news has created confusion about facts and current events.

Sixty-four percent of those surveyed said fake news causes “a great deal” of confusion, and nearly a quarter said they have shared fake news themselves — 14 percent said they shared something they knew was fake at the time, and 16 percent said they shared something and only later realized it wasn’t true.

In a Facebook post Thursday, CEO Mark Zuckerberg explained that there will be more work on this problem to come, and acknowledged that it requires a rethinking of the role the company plays in the modern media environment. “I think of Facebook as a technology company, but I recognize we have a greater responsibility than just building technology that information flows through. While we don’t write the news stories you read and share, we also recognize we’re more than just a distributor of news. We’re a new kind of platform for public discourse — and that means we have a new kind of responsibility to enable people to have the most meaningful conversations, and to build a space where people can be informed,” he wrote.