"Trust has been an issue": Facebook's ads take a sour turn

Facebook (FB) is an advertiser's dream: a digital walled garden where 2 billion people hand over information about where they work, what schools they attended and what they like to do on the weekends.

That's helped make the company a must-have for many marketers, who can slice and dice their ad campaigns to target specific groups, such as women over 45 years old who like to garden and who live in Idaho. Its reach and reams of data have also turned Facebook into a mighty financial engine, with its second-quarter ad sales jumping 47 percent to $9.2 billion from a year earlier.

Yet that success has come at a cost for Facebook. The company faces questions about how it sells ads, its safeguards for ensuring malicious or problematic ads are weeded out of the system, and whether lawmakers and regulators need to step in. Russia bought $100,000 in advertising on its platform during the 2016 presidential election cycle, generating 3,000 ads connected with 470 "inauthentic accounts," the social media giant said earlier this month.

Investigative news site ProPublica's recent revelation that Facebook could target ads to unsavory groups such as self-described "Jew haters" adds to the questions facing the social network. While most consumers understand the Internet has no shortage of hate speech, news that Facebook let advertisers target such a demographic came as a shock.

That Facebook within a matter of minutes sold to ProPublica $30 worth of ads targeting anti-Semites underscored what critics say is a critical weakness of digital ad systems: When everything is automated, who is acting as a gatekeeper to weed out the bad actors?

To be fair, it's not just Facebook. Google and Twitter have also been taken to task for allowing advertisers to target racist and hateful keywords and demographics.

"These kinds of things have been going on for maybe longer than we are aware," said Kent Grayson, associate professor of marketing at Northwestern University, who pointed to fake reviews on the site of e-commerce giant Amazon as an example of how the system can be gamed to sway consumers. "The influence on our political process has heightened people's awareness that a name on a Facebook account or Twitter account might not be the person they say they are."

He added, "It could be just a bot that has identified you as someone who is open to a message."

Facebook on Thursday said it found a "small percentage" of people who filled in offensive responses to data about their employer or education in their profiles, and is removing the self-reported fields as targetable information for marketers until it updates its processes. The company declined to comment beyond the statement.

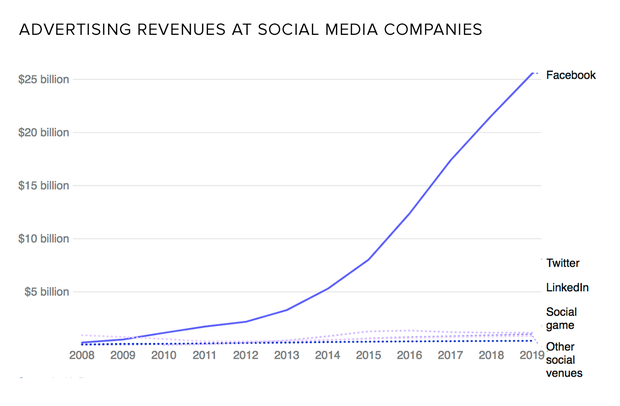

Despite these bumps, digital advertising continues to grow. Digital ad sales rose 22 percent to $72.5 billion last year, according to the Interactive Advertising Bureau. By some measures, online ads have now surpassed TV advertising. Marketers are migrating to digital ads because they're following consumers away from older media such as newspapers, magazines and TV. Digital ads can also provide more data to advertisers, which is invaluable in figuring out whether their ads are effective. On top of that, they're cheap compared with older forms of media, with advertisers spending fractions of a penny for a single ad.

"All those marketers really care about is, does it ring the cash register?" Facebook chief operating officer Sheryl Sandberg told The Drum earlier this month.

That's true, to an extent. Brand perception comes into play when advertisers find their spots running next to unsavory content, which can eventually erode consumers' trust in a brand and lead to lower sales.

"We are at a crossroads -- the internet provides a ton of information, and some of it is acceptable to advertisers and some is not," said David Hahn, chief product officer at Integral Ad Science, a firm that assesses fraud and brand safety concerns and works with Facebook, among other clients. "If a brand appears next to objectionable content, about 35 to 45 percent of customers rethink their relationship to the brand."

Digital advertising is capable of reaching huge audiences with one ad buy, a scope that is creating new issues for advertisers, such as ensuring their ads appear on sites that align with their brands. In one case earlier this year, Kellogg's and Vanguard were among the companies that pulled ads from conservative news site Breitbart because it didn't mesh with their policies and images.

"The sheer volume isn't like anything we've ever seen with other channels," Hahn said. "If you buy TV slots, chances are you can pay someone to watch that. If you deliver 100 million impressions every day, you can't do that."

Some advertisers maintain "white lists" of sites they've pre-approved as appropriate for their brands, as well as "black lists" of sites and apps that are forbidden for the campaign. That doesn't always guarantee a company can sidestep unsavory content, with Integral Ad Science finding that one-third of ad impressions flagged as "risky" involved violence, such as an ad appearing next to coverage of a bombing.

Facebook's $493 billion market valuation is based on its huge advertising growth. But whether that continues may hinge on trust, including whether consumers continue to hand over their data to Facebook and whether advertisers feel there's enough oversight into where and how their ads appear.

"Facebook, like any company, has to deal with the trust it has with multiple stakeholders," said Northwestern's Grayson. "The bigger a company gets, the more they have to think about these various stakeholders more seriously."

At the same time, Americans are placing less trust in institutions, ranging from the media to the government, he added. That growing mistrust heightens the risks for Facebook if its users decide the company isn't acting in their best interests -- or is selling ads to shady companies or facilitating propaganda.

Without trust, he said, "the whole business model for Facebook falls apart."

Facebook and other digital platforms are working behind the scenes to weed out fake accounts, Hahn of IAS said.

"There are services that you can use to make sure you are de-risked, and that's what we are working toward," he said. "Trust certainly has been an issue, but we think we're getting a lot better."