How Facebook and Google served up ads for racists and anti-Semites

It's hard to overstate how dramatically the internet, especially search engines like Google and social media like Facebook, has overhauled the process of buying advertisements to promote products and services. The business of buying and selling ad space has gone from a highly manual process involving sales reps, rate cards and price negotiations between marketers and publishers to one that's largely self-serve and automated, with deals completed in microseconds.

A stark spotlight was cast on this little-discussed aspect of the online age when the investigative news website ProPublica revealed a fetid world where it's possible to target ads to seemingly any demographic -- even Nazis and self-described "Jew haters" -- on a global platform like Facebook that otherwise seems to value inclusiveness in its terms of service.

The problem is that in most online ad sales, whether through Facebook (FB), Google (GOOGL), Twitter (TWTR) or elsewhere, humans are generally not in the loop. Facebook and Google, for example, rely on simple self-serve forms, so anyone can place an ad with just a few clicks.

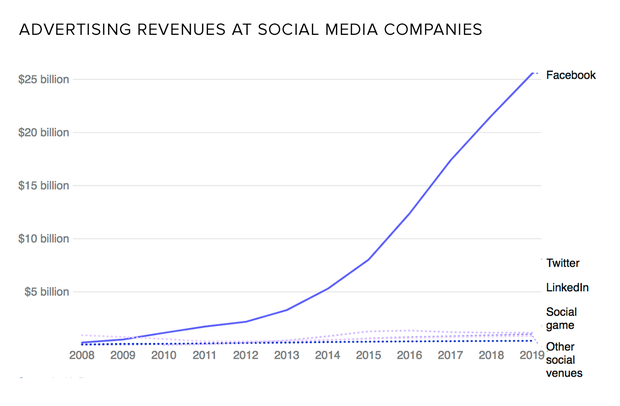

One reason for this is scale. A platform like Facebook is different from a traditional magazine, or even a typical large website that might draw a few million unique visitors per day. Facebook runs an enormous volume of ads every day, generating some $9.3 billion in revenue in the second quarter of 2017 alone, which makes it difficult to vet each and every sales pitch aimed at its 2 billion users.

But there's also a philosophical component: Facebook has long contended that it is not a media company and therefore has no obligation to monitor or censor its content. (In recent months, Facebook has finally begun to walk back from that position. Growing political pressure from Washington may have something to do with that shift.)

So what are the actual mechanics of buying an ad on Facebook and other major sites? It's pretty straightforward. Facebook's Ads Manager is a self-serve tool in which you define the specific audience your ads will reach. You can specify a geographic location as precise as a single city or as broad as the entire world. You can also exclude regions, so your ad can run throughout Texas but not in, say, Austin. You can, of course, choose the age range and gender you want to reach, as well as speakers of particular languages.
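For readers curious how include-and-exclude rules like these combine, here's a minimal Python sketch. It's illustrative only: the `User` and `Targeting` types, their fields and the `matches` function are hypothetical and don't reflect Facebook's actual Ads Manager or its Marketing API.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class User:
    # Hypothetical user record; a real platform tracks far more signals.
    state: str
    city: str
    age: int
    gender: str
    language: str


@dataclass
class Targeting:
    # Hypothetical audience spec mirroring the options described above.
    # An empty set means "no restriction" for that attribute.
    include_states: set[str] = field(default_factory=set)
    exclude_cities: set[str] = field(default_factory=set)
    min_age: int = 18
    max_age: int = 65
    genders: set[str] = field(default_factory=set)
    languages: set[str] = field(default_factory=set)


def matches(user: User, spec: Targeting) -> bool:
    """Return True if the user falls inside the targeted audience."""
    if spec.include_states and user.state not in spec.include_states:
        return False
    # Exclusions win over inclusions: Texas yes, Austin no.
    if user.city in spec.exclude_cities:
        return False
    if not spec.min_age <= user.age <= spec.max_age:
        return False
    if spec.genders and user.gender not in spec.genders:
        return False
    if spec.languages and user.language not in spec.languages:
        return False
    return True


spec = Targeting(include_states={"TX"}, exclude_cities={"Austin"}, min_age=25, max_age=44)
houston = User(state="TX", city="Houston", age=30, gender="female", language="en")
austin = User(state="TX", city="Austin", age=30, gender="female", language="en")
print(matches(houston, spec), matches(austin, spec))  # True False
```

Letting an exclusion override a broader inclusion is one plausible design choice, and it's what makes "all of Texas except Austin" expressible with two simple lists.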

Facebook's Detailed Targeting options are where things get interesting -- and where the social media service ran into trouble with its anti-Jewish-themed advertising.

Detailed Targeting lets you include people who have expressed interest in certain kinds of content, behaviors and opinions. This is where Facebook's infamous appetite for data collection comes into play: everything you like, share and post on Facebook helps build a profile of you. Advertisers can simply search or browse for whatever attributes they want to target. Dog lovers, car enthusiasts, Democrats, Republicans and thousands of other audiences are a few clicks away in Ads Manager, which makes recommendations based on the terms you type.

In the case of ProPublica's "Jew hater" targeting example, enough users had entered offensive details in the employer and education fields of their profiles, such as listing their occupation as "Jew hater," that the term surfaced as a selectable option in the targeting database.

It's important to realize that these targeting options are created algorithmically: Facebook automatically compiles user interests and adds them to the database with little human involvement, and the depth, breadth and variety of those interests are vast.
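Why would a phrase like that surface at all? One plausible mechanism, sketched here in Python purely as an assumption, is a simple popularity threshold: count identical self-reported entries and promote any that enough users share. The `MIN_AUDIENCE` cutoff, the field names and `build_targeting_options` are all hypothetical; Facebook hasn't published how its tool actually generates categories.

```python
from __future__ import annotations

from collections import Counter

# Hypothetical cutoff: how many users must share an entry before it becomes
# a selectable targeting option. Facebook's real rule, if any, isn't public.
MIN_AUDIENCE = 3


def build_targeting_options(
    profiles: list[dict[str, str]],
    field_names: tuple[str, ...] = ("employer", "field_of_study"),
) -> list[str]:
    """Turn free-text profile fields into targeting options.

    Entries are normalized (trimmed and lowercased) and counted; any entry
    reported by at least MIN_AUDIENCE users is kept. Nothing checks what the
    entry actually says, which is how an offensive phrase can slip through.
    """
    counts = Counter()
    for profile in profiles:
        for name in field_names:
            value = profile.get(name, "").strip().lower()
            if value:
                counts[value] += 1
    return sorted(entry for entry, n in counts.items() if n >= MIN_AUDIENCE)


profiles = [
    {"employer": "Acme Corp"},
    {"employer": "acme corp "},
    {"employer": "ACME CORP", "field_of_study": "History"},
    {"field_of_study": "history"},
    {"employer": "Flat Earth Society"},
]
print(build_targeting_options(profiles))  # ['acme corp']
```

The point of the sketch is what's missing: the only test an entry must pass is popularity, so any phrase typed by enough people becomes targetable, regardless of what it says.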

ProPublica, for example, was able to create ads that targeted people who expressed anti-Jewish sentiments. Facebook said it has since scrubbed the system of those particular options; try to replicate that same ad today, and you'll find there's no option to add Ku Klux Klan fans to your demographic choices. But it's still easy to create ads that target "9/11 Truthers," Flat Earth believers and people who seem to think our world will soon be destroyed by the rogue planet Nibiru. None of these groups is as plainly harmful as white supremacists, but it may still make you uncomfortable that it's so easy to target ads to people who live on the fringe of rational society.

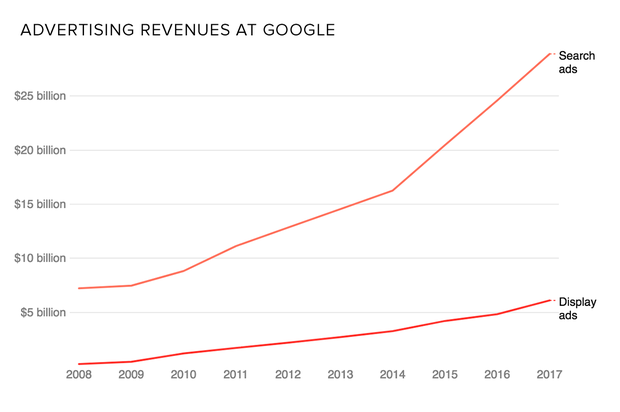

Facebook is hardly the only online platform that has enabled such malicious advertising. Google's AdWords service, for example, has a similar capability; the difference is that advertisers choose the keywords and phrases people are likely to type into Google searches. Not surprisingly, that opens up an even broader range of targeting options.

Google is now trying to reduce the number of potentially offensive ads by restricting the kinds of suggestions its tool makes when advertisers search for keywords. "This violates our policies against derogatory speech and we have removed it," a Google spokesperson told the website BuzzFeed News after it revealed the problematic keywords. The company later added, "Our goal is to prevent our keyword suggestions tool from making offensive suggestions, and to stop any offensive ads appearing."
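The kind of suggestion filtering Google describes can be sketched, very loosely, as a blocklist check applied before suggestions are shown. This Python sketch is an assumption throughout: prefix matching stands in for whatever relevance model Google actually uses, and `BLOCKED_TERMS` and `suggest_keywords` are hypothetical names, not part of any AdWords API.

```python
from __future__ import annotations

# Hypothetical blocklist; a production system would likely combine curated
# lists with classifiers and human review.
BLOCKED_TERMS = {"hater"}


def suggest_keywords(query: str, candidates: list[str], limit: int = 5) -> list[str]:
    """Return up to `limit` candidates starting with `query`, minus blocked ones."""
    prefix = query.strip().lower()
    suggestions = []
    for keyword in candidates:
        text = keyword.lower()
        if not text.startswith(prefix):
            continue
        # Drop any suggestion that contains a blocked term anywhere in it.
        if any(term in text for term in BLOCKED_TERMS):
            continue
        suggestions.append(keyword)
        if len(suggestions) == limit:
            break
    return suggestions


candidates = ["dog training", "dog lovers", "dog haters", "dog grooming"]
print(suggest_keywords("dog", candidates))  # ['dog training', 'dog lovers', 'dog grooming']
```

Plain substring matching like this is blunt: it can block innocent phrases that happen to contain a listed term while missing misspellings and coded language, which is one reason filtering alone is unlikely to be enough.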

Facebook is taking similar steps. Last Friday, the social network announced: "Our community standards strictly prohibit attacking people based on their protected characteristics, including religion, and we prohibit advertisers from discriminating against people based on religion and other attributes." To that end, Facebook is disabling the ability to target ads based on self-reported information in fields such as education and employer.

The potential for offensive and disruptive ads has been simmering for years, but online platforms simply can't ignore the recent barrage of bad press from incidents like the Russian-backed political ads during the 2016 presidential election and the more recent offensive keyword targeting. While it's not clear exactly what the outcome will be, it's a fair bet that it will involve some combination of smarter keyword filtering and human checks and balances in the ad buying and reviewing process.