Wrongful arrest exposes racial bias in facial recognition technology

REVERB is a documentary series from CBS Reports. The latest episode is "A City Under Surveillance."

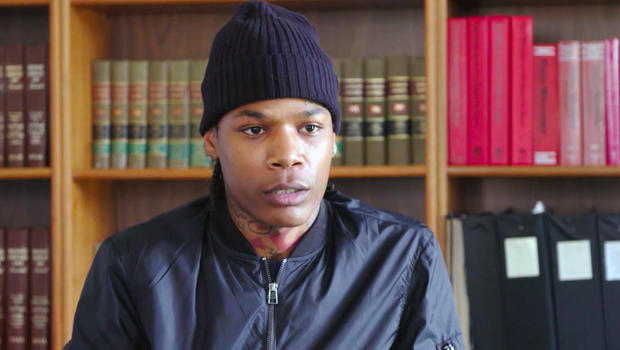

In July 2019, Michael Oliver, 26, was on his way to work in Ferndale, Michigan, when a police car pulled him over. The officer informed him that there was a felony warrant out for his arrest.

"I thought he was joking because he was laughing," recalled Oliver. "But as soon as he took me out of my car and cuffed me, I knew this wasn't a joke." Shortly thereafter, Oliver was transferred to Detroit police custody and charged with larceny.

Months later, at a pre-trial hearing, he would finally see the evidence against him — a single screen-grab from a video of the incident, taken on the accuser's cellphone. Oliver, who has an oval-shaped face and a number of tattoos, shared few physical characteristics with the person in the photo.

"He looked nothing like me," Oliver said. "He didn't even have tattoos." The judge agreed, and the case was promptly dismissed.

It was nearly a year later that Oliver learned his wrongful arrest was based on an erroneous match using controversial facial recognition technology. Police took a still image from a video of the incident and ran it through a software program manufactured by a company called DataWorks Plus. The software measures various points on a person's face — the space between their eyes, the slope of their nose — to generate a unique "face print." The Detroit Police Department then checks for a possible match in a database of photos; the system can access thousands of mugshots as well as the Michigan state database of driver's license photos.

Oliver's face came up as a match, but it wasn't him, and there was plenty of evidence to prove it wasn't.

Facial recognition technology has become commonplace in our society. It's used to unlock our cellphones and to enhance airport security. But many view it as a flawed technology that has the potential to cause serious harm. It misidentifies Black and Brown faces at substantially higher rates than White faces, in some cases nearly 100% of the time. And that's especially relevant in a city like Detroit, where nearly 80% of residents are Black.

"I think the perception that data is neutral has gotten us into a lot of trouble," said Tawana Petty, a digital activist who works with the Detroit Equity Action Lab. "The algorithms are programmed by White men using data gathered from racist policies. It's replicating racial bias that starts with humans and then is programmed into technology."

Petty points out that more than a dozen other cities, including San Francisco and Boston, have banned such technology because of concerns about civil liberties and privacy. She has advocated for a similar ban in Detroit. Instead, the city voted in October to renew the contract with DataWorks.

Even supporters acknowledge that the system has a high error rate. Detroit Police Chief James Craig said in June, "If we were just to use the technology by itself, to identify someone" — which he noted would be against department policy — "I would say 96% of the time it would misidentify."

Oliver's case follows the wrongful arrest of Robert Williams, 42, who was also arrested in 2019 for a crime he did not commit, based on the same algorithmic software.

Petty believes that with this technology the human costs far outweigh the benefits. "Yes, innovation is inevitable," she said. "But this wouldn't be the first time we've pulled back on something that we realize wasn't good for the greater of humanity."

Oliver still hasn't recovered from the fallout of his wrongful arrest. "I missed work for trial dates. Then I lost my job," he said. Without an income, he couldn't make rent on his home or keep up with his car payments, and soon he lost both. A year later, he is effectively homeless, couch-surfing with friends and family, and desperately seeking a new job.

In July, Oliver filed a lawsuit against the city of Detroit for at least $12 million. The lawsuit accuses Detroit Police of using "failed facial recognition technology knowing the science of facial recognition has a substantial error rate among black and brown persons of ethnicity which would lead to the wrongful arrest and incarceration of persons in that ethnic demographic."

The police department has acknowledged that the lead investigator on the case didn't perform the due diligence they should have before making the arrest.

"We sent the image to the detective. But then from there, the detective has to go out and actually look at the photo and compare it to any other information," said Andrew Rutebuka, head of the Crime Intelligence Unit that uses the technology. Investigators are trained to follow the facts as they would in any other case, for example by confirming the person's whereabouts at the time of the crime or by matching other records. "The software just provides the lead," said Rutebuka. "The detective has to do their portion of the work and actually follow up."

Since Oliver's arrest, the Detroit Police Department has revised its policy on using facial recognition software. Now it can be used only in cases involving a violent crime. Detroit has the fourth-highest murder rate in the nation, and the department maintains that the program is helping to solve cases that would otherwise go cold.

Some local families support the technology. Marsheda Holloway credits the system with helping unravel the murder of her 29-year-old cousin Denzel by retracing where he went and who he was with before he was killed.

"I never want another family to go through what me and my family went through," she said. "If facial recognition can help, we all need it. Everybody needs something."

But Michael Oliver isn't sure the software is worth it. "I used to be able to take care of my family," he said. "I want my old life back."

Advocates believe there are dozens of similar wrongful arrests, but they are difficult to identify because the police department isn't required to disclose when a match was made using facial recognition software.

"Hopefully I win my case," Oliver said. "I don't want anyone else to go through this."