Teaching computers to learn like humans

Computers have advanced by leaps and bounds in recent years. Yet even the best artificial intelligence systems have lacked humans' ability to learn new concepts from very little data. People need only one or a handful of examples to learn a concept as basic as "a horse," and they can then generalize from those examples. Computers, by contrast, typically require hundreds or even thousands of examples of a horse to grasp the same idea.

Brenden Lake, a Moore-Sloan Data Science Fellow at New York University, wanted to narrow the gap in learning ability between humans and computers. Lake and colleagues from the Massachusetts Institute of Technology and the University of Toronto created a computer model that mimics the human ability to learn a new concept from a single example. Their research is published in the journal Science.

"We wanted to develop new, more human-like algorithms," Lake told CBS News. "And the best example of intelligence that we have is human."

The team started with more than 1,600 different handwritten characters from alphabets around the world. They also recorded how different people drew each character: which pen strokes they used and in what order. The data was then used to build a model that could "learn" these visual symbols and generalize about them from very few examples.
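To make the idea of "strokes and their order" concrete, here is a minimal toy sketch, not the team's actual model. It assumes a character can be stored as an ordered list of pen strokes, each a list of (x, y) points, and produces a new example of the same character by nudging each stroke and adding small per-point jitter. The names `Character`, `sample_exemplar`, `offset_noise`, and `motor_noise` are hypothetical, chosen only for illustration.

```python
import random
from dataclasses import dataclass
from typing import List, Optional, Tuple

Point = Tuple[float, float]

@dataclass
class Character:
    """Hypothetical toy character: an ordered list of pen strokes, each a list of (x, y) points."""
    strokes: List[List[Point]]  # strokes[0] is drawn first, strokes[1] second, and so on

def sample_exemplar(char: Character, offset_noise: float = 0.1,
                    motor_noise: float = 0.05,
                    rng: Optional[random.Random] = None) -> Character:
    """Draw a new example of `char` that keeps the same strokes in the same order.

    Each stroke is shifted by one random offset (where it lands on the page),
    then every point gets its own small Gaussian jitter (an unsteady hand).
    """
    if rng is None:
        rng = random.Random()
    new_strokes: List[List[Point]] = []
    for stroke in char.strokes:
        dx, dy = rng.gauss(0.0, offset_noise), rng.gauss(0.0, offset_noise)
        new_strokes.append([
            (x + dx + rng.gauss(0.0, motor_noise), y + dy + rng.gauss(0.0, motor_noise))
            for x, y in stroke
        ])
    return Character(new_strokes)

# Usage: a "+" drawn as a horizontal stroke followed by a vertical one.
plus = Character(strokes=[[(0.0, 0.5), (1.0, 0.5)], [(0.5, 0.0), (0.5, 1.0)]])
variant = sample_exemplar(plus, rng=random.Random(0))
print(variant.strokes)  # two strokes, original order, each slightly displaced
```

Keeping stroke order fixed while varying only positions is what lets a single example stand in for a whole family of plausible drawings; the real model captures far more structure than this sketch.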

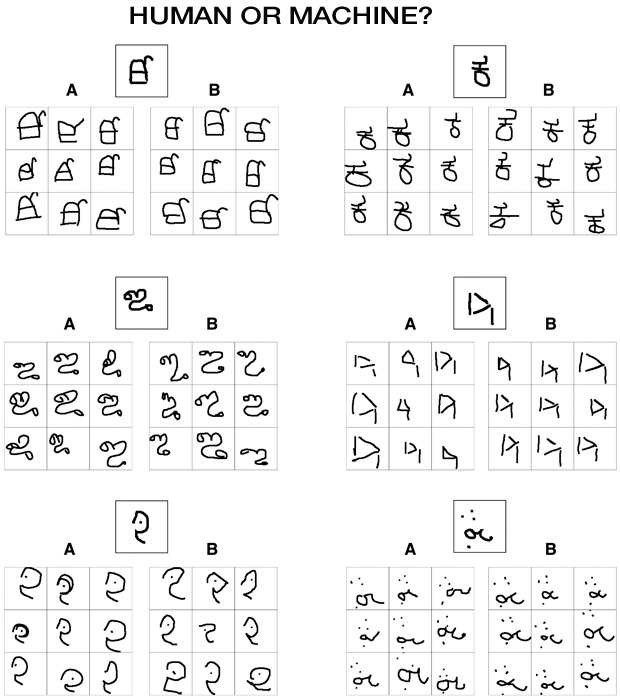

The model, called Bayesian program learning (BPL), was then tested against people and other computational approaches on five concept-learning tasks. When asked to produce new examples of a novel character, BPL performed as well as humans and outperformed other machine-learning approaches.

"Their model classifies, parses, and recreates handwritten characters, and can generate new letters of the alphabet that look 'right' as judged by Turing-like tests of the model's output in comparison to what real humans produce," a press release stated. The original Turing test asks whether a human judge can reliably tell a machine's responses from a person's; here, judges compared the model's drawings with drawings made by people.
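The "classifies" part can be illustrated with a drastically simplified stand-in for Bayesian one-shot classification. This is an assumption-laden toy, not BPL: each candidate character is known from a single stored drawing, a new drawing is scored by its log-likelihood under an isotropic Gaussian noise model centered on that drawing's points, and, with equal prior probability for every character, the most probable character is simply the one with the highest likelihood. The function names and the noise parameter `sigma` are hypothetical.

```python
import math
from typing import Dict, List, Tuple

Stroke = List[Tuple[float, float]]
Drawing = List[Stroke]  # ordered pen strokes

def log_likelihood(drawing: Drawing, exemplar: Drawing, sigma: float = 0.1) -> float:
    """Toy log p(drawing | character): 2-D Gaussian noise around each exemplar point."""
    # Assumed simplification: different stroke structure means "impossible".
    if len(drawing) != len(exemplar) or any(len(s) != len(t) for s, t in zip(drawing, exemplar)):
        return float("-inf")
    ll = 0.0
    for stroke, ref in zip(drawing, exemplar):
        for (x, y), (rx, ry) in zip(stroke, ref):
            sq_dist = (x - rx) ** 2 + (y - ry) ** 2
            # log of the isotropic bivariate normal density
            ll += -sq_dist / (2 * sigma ** 2) - math.log(2 * math.pi * sigma ** 2)
    return ll

def classify_one_shot(drawing: Drawing, exemplars: Dict[str, Drawing]) -> str:
    """With a uniform prior, the maximum-posterior class is the maximum-likelihood one."""
    return max(exemplars, key=lambda name: log_likelihood(drawing, exemplars[name]))

# One example per character is all the "training data" this classifier gets.
exemplars = {
    "plus": [[(0.0, 0.5), (1.0, 0.5)], [(0.5, 0.0), (0.5, 1.0)]],
    "tee":  [[(0.0, 1.0), (1.0, 1.0)], [(0.5, 0.0), (0.5, 1.0)]],
    "dash": [[(0.0, 0.5), (1.0, 0.5)]],
}
query = [[(0.02, 0.48), (0.97, 0.52)], [(0.51, 0.03), (0.49, 0.98)]]
print(classify_one_shot(query, exemplars))  # "plus"
```

The real system scores drawings under a much richer generative model of how strokes are produced, which is what lets it cope with drawings whose strokes don't line up point for point.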

So far, the algorithm works only for handwritten characters, but Lake says the approach could be extended to other symbol-based systems, such as hand gestures, speech recognition, or dance moves.

"We're getting computers to infer things about what they already know by studying how people learn," Ruslan Salakhutdinov of the University of Toronto, another author on the study, told CBS News.