Facebook auto-generates celebratory videos with extremist images

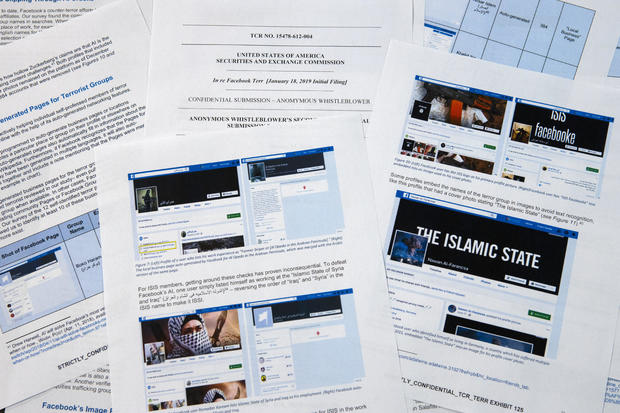

- A whistleblower complaint shows Facebook inadvertently uses propaganda by militant groups to auto-generate videos and pages that extremists could use for networking.

- Much of the content Facebook has banned slips through its algorithms and remains easy to find.

- One extremist's profile included auto-generated video with flag-burning and a video of al-Qaida leader Ayman al-Zawahiri urging jihadi groups not to fight among themselves.

- The complaint comes as Facebook tries to stay ahead of growing criticism over its ability to keep hate speech, live-streamed murders and suicides off its service.

Washington - The animated video begins with a photo of the black flags of jihad. Seconds later, it flashes highlights of a year of social media posts: plaques of anti-Semitic verses, talk of retribution and a photo of two men carrying more jihadi flags while they burn the stars and stripes.

It wasn't produced by extremists; it was created by Facebook. In a clever bit of self-promotion, the social media giant takes a year of a user's content and auto-generates a celebratory video. In this case, the user called himself "Abdel-Rahim Moussa, the Caliphate."

"Thanks for being here, from Facebook," the video concludes in a cartoon bubble before flashing the company's famous "thumbs up."

Facebook likes to give the impression that it's staying ahead of extremists by taking down their posts, often before users even see them. But a confidential whistleblower's complaint to the Securities and Exchange Commission obtained by The Associated Press alleges the social media company has exaggerated its success. Even worse, it shows that the company is inadvertently making use of propaganda by militant groups to auto-generate videos and pages that could be used for networking by extremists.

According to the complaint, over a five-month period last year, researchers monitored pages by users who affiliated themselves with groups the U.S. State Department has designated as terrorist organizations. In that period, 38 percent of the posts with prominent symbols of extremist groups were removed. In its own review, the AP found that as of this month, much of the banned content cited in the study -- an execution video, images of severed heads, propaganda honoring martyred militants -- slipped through the algorithmic web and remained easy to find on Facebook.

The complaint is landing as Facebook tries to stay ahead of a growing array of criticism over its privacy practices and its ability to keep hate speech, live-streamed murders and suicides off its service. In the face of criticism, CEO Mark Zuckerberg has spoken of his pride in the company's ability to weed out violent posts automatically through artificial intelligence. During an earnings call last month, for instance, he repeated a carefully worded formulation that Facebook has been employing.

"In areas like terrorism, for al-Qaida and ISIS-related content, now 99 percent of the content that we take down in the category our systems flag proactively before anyone sees it," he said. Then he added: "That's what really good looks like." Zuckerberg did not offer an estimate of how much of total prohibited material is being removed.

Glaring flaws

The research behind the SEC complaint is aimed at spotlighting glaring flaws in the company's approach. Last year, researchers began monitoring users who explicitly identified themselves as members of extremist groups. It wasn't hard to document. Some of these people even list the extremist groups as their employers.

One profile heralded by the black flag of an al-Qaida affiliated group listed his employer, perhaps facetiously, as Facebook. The profile that included the auto-generated video with the flag-burning also had a video of al-Qaida leader Ayman al-Zawahiri urging jihadi groups not to fight among themselves.

While the study is far from comprehensive -- in part because Facebook rarely makes much of its data publicly available -- researchers involved in the project said the ease of identifying these profiles using a basic keyword search and the fact that so few of them have been removed suggest that Facebook's claims that its systems catch most extremist content are not accurate.

"I mean, that's just stretching the imagination to beyond incredulity," said Amr Al Azm, one of the researchers involved in the project. "If a small group of researchers can find hundreds of pages of content by simple searches, why can't a giant company with all its resources do it?" Al Azm, a professor of history and anthropology at Shawnee State University in Ohio, has also directed a group in Syria documenting the looting and smuggling of antiquities.

A video auto-generated by Facebook based on one user's posts contains images of a burning U.S. flag, anti-Semitic messages and a call for "retribution."

Facebook concedes that its systems are not perfect but said it's making improvements. "After making heavy investments, we are detecting and removing terrorism content at a far higher success rate than even two years ago," the company said in a statement. "We don't claim to find everything and we remain vigilant in our efforts against terrorist groups around the world."

But as a stark indication of how easily users can evade Facebook, one page from a user called "Nawan al-Farancsa" has a header whose white lettering against a black background says in English "The Islamic State." The banner is punctuated with a photo of an explosive mushroom cloud rising from a city.

The profile should have caught the attention of Facebook -- as well as counterintelligence agencies. It was created in June 2018 and lists the user as coming from Chechnya, once a militant hotspot. It says he lived in Heidelberg, Germany, and studied at a university in Indonesia. Some of the user's friends also posted militant content.

Evasive maneuvers

The page, still up in recent days, apparently escaped Facebook's systems. That's because of an obvious and long-running evasion of moderation that Facebook should be adept at recognizing: The letters were not searchable text but embedded in a graphic block. But the company said its technology scans audio, video and text -- including when it's embedded -- for images that reflect violence, weapons or logos of prohibited groups.

The social networking giant has endured a rough two years beginning in 2016, when Russia's use of social media to meddle with the U.S. presidential elections came into focus. Zuckerberg initially downplayed the role Facebook played in the influence operation by Russian intelligence, but the company later apologized.

Facebook said it now employs 30,000 people who work on its safety and security practices, reviewing potentially harmful material and anything else that might not belong on the site. Still, the company is putting a lot of its faith in artificial intelligence and its systems' ability to eventually weed out bad stuff without the help of humans. The new research suggests that goal is a long way away, and some critics allege that the company isn't making a sincere effort.

When the material isn't removed, it's treated the same as anything else posted by Facebook's 2.4 billion users -- celebrated in animated videos, linked and categorized and recommended by algorithms. But it's not just the algorithms that are to blame.

The researchers found that some extremists are using Facebook's "Frame Studio" to post militant propaganda. The tool lets people decorate their profile photos within graphic frames -- to support causes or celebrate birthdays, for instance. Facebook said those framed images must be approved by the company before they are posted.

Hany Farid, a digital forensics expert at the University of California, Berkeley, who advises the Counter-Extremism Project, a New York and London-based group focused on combatting extremist messaging, said Facebook's artificial intelligence system is failing. He said the company isn't motivated to tackle the problem because it would be expensive.

"The whole infrastructure is fundamentally flawed," he said. "And there's very little appetite to fix it because what Facebook and the other social media companies know is that once they start being responsible for material on their platforms, it opens up a whole can of worms."

A list of extremist sympathizers

Another Facebook auto-generation function gone awry scrapes employment information from users' pages to create business pages. The function is supposed to produce pages meant to help companies network, but in many cases they're serving as a branded landing space for extremist groups. The function allows Facebook users to like pages for extremist organizations, including al-Qaida, the Islamic State group and the Somali-based al-Shabab, effectively providing a list of sympathizers for recruiters.

At the top of an auto-generated page for al-Qaida in the Arabian Peninsula, the AP found a photo of the damaged hull of the USS Cole, which was bombed by al-Qaida in a 2000 attack off the coast of Yemen. It killed 17 U.S. Navy sailors. It's the defining image in AQAP's own propaganda. The page includes the Wikipedia entry for the group and had been liked by 277 people when last viewed this week.

As part of the investigation for the complaint, Al Azm's researchers in Syria looked closely at the profiles of 63 accounts that liked the auto-generated page for Hay'at Tahrir al-Sham, a group formed by the merger of militant groups in Syria, including the al-Qaida affiliated al-Nusra Front. The researchers were able to confirm that 31 of the profiles matched real people in Syria. Some of them turned out to be the same individuals Al Azm's team was monitoring in a separate project to document the financing of militant groups through antiquities smuggling.

Symbols of supremacy and hatred

Facebook also faces a challenge with U.S. hate groups. In March, the company announced that it was expanding its prohibited content to also include white nationalist and white separatist content -- previously it only took action with white supremacist content. It said it has banned more than 200 white supremacist groups. But it's still easy to find symbols of supremacy and racial hatred.

The researchers in the SEC complaint identified over 30 auto-generated pages for white supremacist groups, whose content Facebook prohibits. They include "The American Nazi Party" and the "New Aryan Empire." A page created for the "Aryan Brotherhood Headquarters" marks the office on a map and asks whether users recommend it. One endorser posted a question: "How can a brother get in the house."

Even supremacists flagged by law enforcement are slipping through the net. Following a sweep of arrests beginning in October, federal prosecutors in Arkansas indicted dozens of members of a drug-trafficking ring linked to the New Aryan Empire. A legal document from February paints a brutal picture of the group, alleging murder, kidnapping and intimidation of witnesses that in one instance involved using a searing-hot knife to scar someone's face. It also alleges the group used Facebook to discuss New Aryan Empire business.

But many of the individuals named in the indictment have Facebook pages that were still up in recent days. They leave no doubt of the users' white supremacist affiliation, posting images of Hitler, swastikas and a numerical symbol of the New Aryan Empire slogan, "To The Dirt" -- the members' pledge to remain loyal to the end. One of the group's indicted leaders, Jeffrey Knox, listed his job as "stomp down Honky." Facebook then auto-generated a "stomp down Honky" business page.

Questions about exposure

Social media companies have broad protection in U.S. law from liability stemming from the content that users post on their sites. But Facebook's role in generating videos and pages from extremist content raises questions about exposure. Legal analysts contacted by the AP differed on whether the discovery could open the company up to lawsuits.

At a minimum, the research behind the SEC complaint illustrates the company's limited approach to combatting online extremism. The U.S. State Department lists dozens of groups as "designated foreign terrorist organizations," but Facebook in its public statements said it focuses its efforts on two, the Islamic State group and al-Qaida.

But even with those two targets, Facebook's algorithms often miss the names of affiliated groups. Al Azm said Facebook's method seems to be less effective with Arabic script.

For instance, a search in Arabic for "Al-Qaida in the Arabian Peninsula" turns up not only posts, but an auto-generated business page. One user listed his occupation as "Former Sniper" at "Al-Qaida in the Arabian Peninsula" written in Arabic. Another user evaded Facebook's cull by reversing the order of the countries in the Arabic for ISIS or "Islamic State of Iraq and Syria."

John Kostyack, a lawyer with the National Whistleblower Center in Washington who represents the anonymous plaintiff behind the complaint, said the goal is to make Facebook take a more robust approach to counteracting extremist propaganda.

"Right now we're hearing stories of what happened in New Zealand and Sri Lanka -- just heartbreaking massacres where the groups that came forward were clearly openly recruiting and networking on Facebook and other social media," he said. "That's not going to stop unless we develop a public policy to deal with it, unless we create some kind of sense of corporate social responsibility."

Farid, the digital forensics expert, said Facebook built its infrastructure without thinking through the dangers stemming from content and is now trying to retrofit solutions. "The policy of this platform has been: 'Move fast and break things.' I actually think that for once their motto was actually accurate," he said. "The strategy was grow, grow, grow, profit, profit, profit and then go back and try to deal with whatever problems there are."