The Pfizer-BioNTech vaccine is a top target of conspiracy theories

The Pfizer-BioNTech coronavirus vaccine became a target of conspiracy theories and disinformation campaigns as soon as it was announced, reaching millions of people on sites like Twitter, Reddit and 4chan, according to a recent analysis from a cyber defense firm.

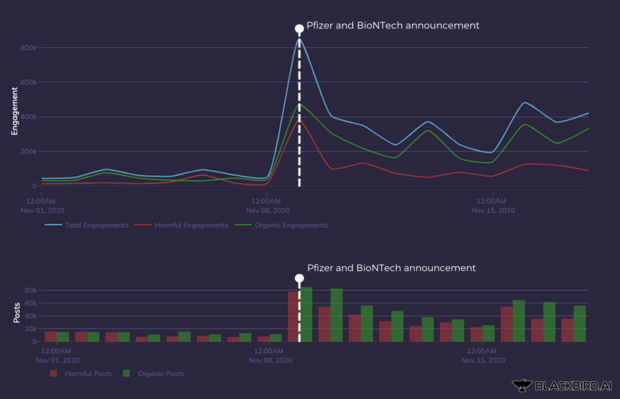

COVID-19 conspiracy narratives, like the false belief that the vaccine was delayed for political reasons, flourished on social networks in the fall and early winter, according to the New York tech security firm Blackbird. The firm created an algorithm that analyzes posts in real time, hunting for signals of what CEO Wasim Khaled calls "synthetic amplification," which indicate activity by botnets and anti-vaccination influencers.

These bogus notions about the vaccines, amplified by a relatively small number of fake accounts and real influencers, reached millions of people, Khaled said.

Botnets and inauthentic accounts — automated accounts not actively managed by humans — have behavioral signatures that are easy for AI to identify, but hard for social networks to eradicate. Companies like Facebook and Twitter use both machine-learning algorithms and human moderators to reduce the spread of conspiracies, but Khaled said botnets are effective because they're inexpensive and easy to deploy.

"Bots and influencers work in tandem," he explained. "We can't prove if they collude behind the scenes, but social media data shows clearly that they influence each other by sharing the same links, repeating the same phrases, tagging the same accounts and jumping in on trending hashtags."

For example, some botnets reach real influencers by spamming conspiracy links into trending hashtags. Another tactic is to manufacture fake trends by synchronizing hundreds of posts that repeat similar anti-vaccine and pseudoscientific claims.

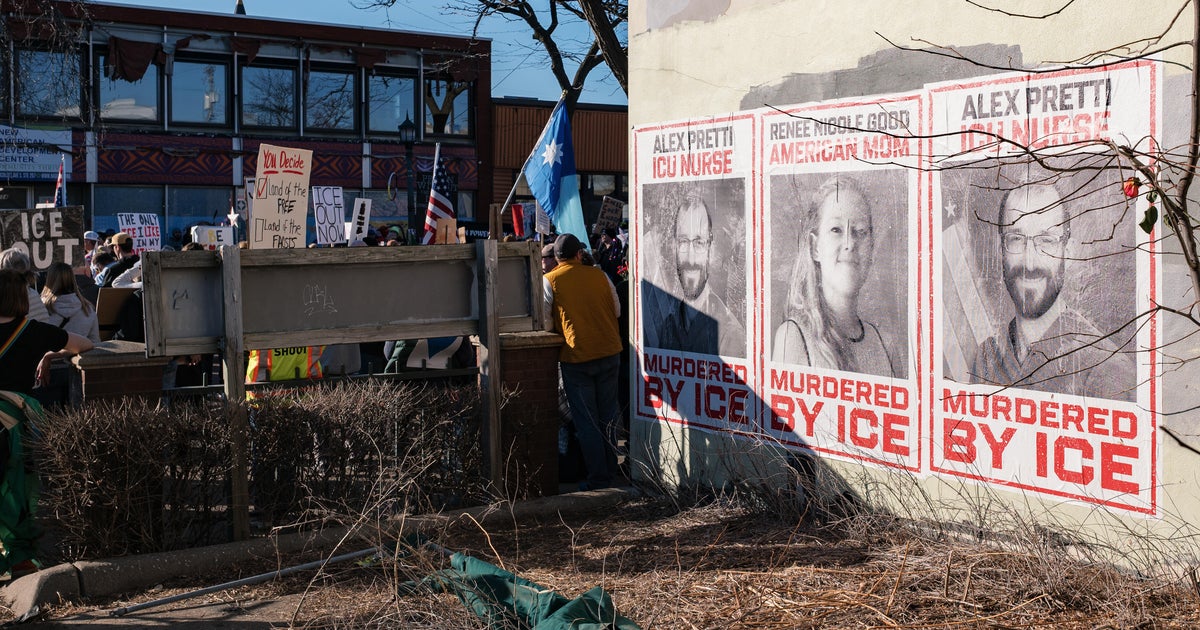

Co-opting trending topics typically means spamming them with provocative rhetoric intended to encourage engagement. That engagement raises a post's visibility and reach, which in turn makes it more likely that a politically aligned influencer will share it further. The content gains momentum by blurring the line between fact and falsehood. For example, themes casting health restrictions such as stay-at-home orders and mask-wearing as an assault on "freedom" and political "rights" thrived among bots and influencers throughout the pandemic.

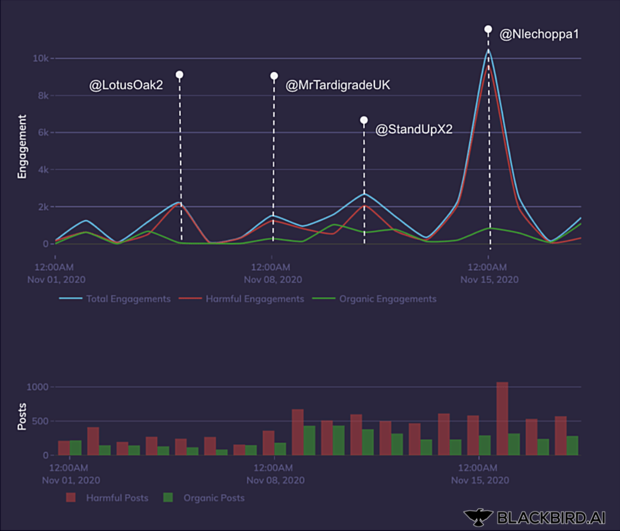

Many online influencer accounts are famous and have millions of followers, but smaller accounts can carry a lot of clout as well. Khaled said that many of the conspiracies peddled by influencers use "anti-vax rhetoric and pseudoscientific messaging" to undermine people's confidence, such as the false notion that mRNA vaccines alter human DNA, an idea that spread widely across Twitter when the Pfizer vaccine was announced last year.

The Twitter accounts @LotusOak2, @BrianGPowell, and @DVaugha49207961 reached millions of people by connecting COVID-19 vaccine conspiracies to existing anti-vaccination disinformation networks. These accounts pushed the false narrative that the COVID-19 vaccine causes infertility by attaching it to the hashtags #InformedConscent, #sterilization, #BigPharma, #Genocide and #ExposeBillGates.

"When an influencer likes or shares a post, even if it's a low-quality bot post, algorithms pick up that signal and spread the fake content far and wide. This means that partisan and divisive content has an advantage. The sheer volume of synthetic activity on social media is staggering," Khaled said. The posts the company identified were political in nature and sought "to exploit growing partisan divides within American society."

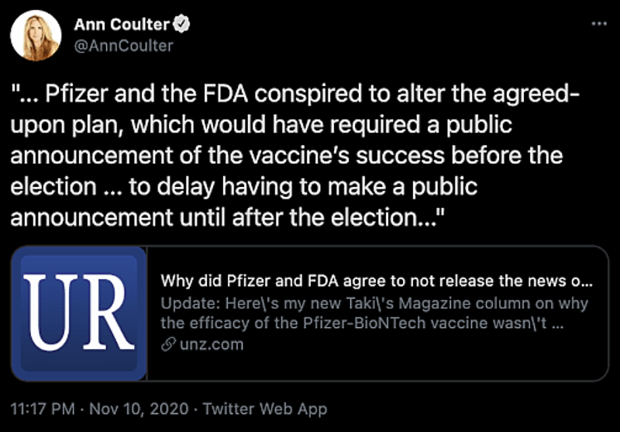

Pfizer's vaccine, announced on November 9, just two days after the contentious presidential election was called on November 7, uncorked a torrent of political and health conspiracies. One prominent theory identified by the AI falsely alleged that pharmaceutical companies deliberately delayed the vaccine's announcement to harm former President Donald Trump. Another falsely claimed that the COVID-19 vaccine is part of a population control scheme.

The AI technology found that some of the top hashtags used by bots and influencers to spread conspiracies were #StopTheSteal, #VaccineGate, #MAGA, #BigPhrama and #SleepyJoe. The tags #StopTheSteal and #MAGA2020LandslideVictory were particularly effective at linking the Pfizer election-theft conspiracy to broader conspiracy theories about election fraud.

The QAnon conspiracy movement used social media influencers and bots to promote the conspiracy video "Plandemic," in which discredited scientist Judy Mikovits argues against vaccines and public health measures like wearing masks. QAnon also attached vaccine conspiracy theories to the New World Order population control conspiracy and to the Great Reset, a post-pandemic policy proposal drafted by the World Economic Forum.

Khaled declined to attribute bot activity to a specific actor, saying that "social media is a dynamic and malleable battle space." Sophisticated botting tools are available for purchase at low cost, and hacking software is widely available for free on the dark web.

Disinformation spreaders are generally motivated by money or political influence. Some are large, organized networks. But many more are small or independent operators. In the U.S., accounts deliberately or inadvertently spreading COVID-19 conspiracies belong to spammers and for-hire cybercriminals, clout-chasing influencers, politicians and political entities, and agenda-driven grassroots organizations, according to Khaled.

Such parties use conspiracies "to frame the world in terms of powerful and sinister hidden forces," Khaled said. "Our AI found that fear is a useful motivator."