Facebook restores disputed posts after its Oversight Board issues first decisions

In its first set of decisions, Facebook's Oversight Board has overturned most of the company's actions on user posts previously removed from the platform for violating the social network's standards. In four of the five cases announced Thursday, which dealt with ethnic hate speech, nudity and COVID-19 misinformation, the board decided to restore the posts.

The board's decisions are binding and Facebook will have seven days to restore content according to the board's verdicts. The company has 30 days to respond to any of the board's policy recommendations.

Facebook established the independent board, sometimes referred to as the company's "Supreme Court," in 2019 in an effort to make its content moderation policies more transparent. In addition to issuing a set of verdicts, the Oversight Board gave Facebook nine policy recommendations and revealed that more than 150,000 cases have been appealed by users.

The board's first rulings did not include a highly anticipated verdict on Facebook's decision to suspend President Donald Trump's account after the January 6 attack on the Capitol, which Facebook referred to the board last week. The board said it will open Trump's case to public comments tomorrow.

The first set of verdicts provided insight into the decision process of the 20-member board, which is made up of legal experts, journalists and human rights advocates. The published decisions included frequent references to international human rights standards on free speech and suggested that board members favor free expression except in cases that could cause harm.

The five cases, which Facebook will use as precedents to decide on similar cases, included a post that pejoratively implied Muslims were inferior, a breast cancer awareness post that depicted female nipples, a post that allegedly quoted a German Nazi leader and a post that falsely claimed a cure for COVID-19 exists.

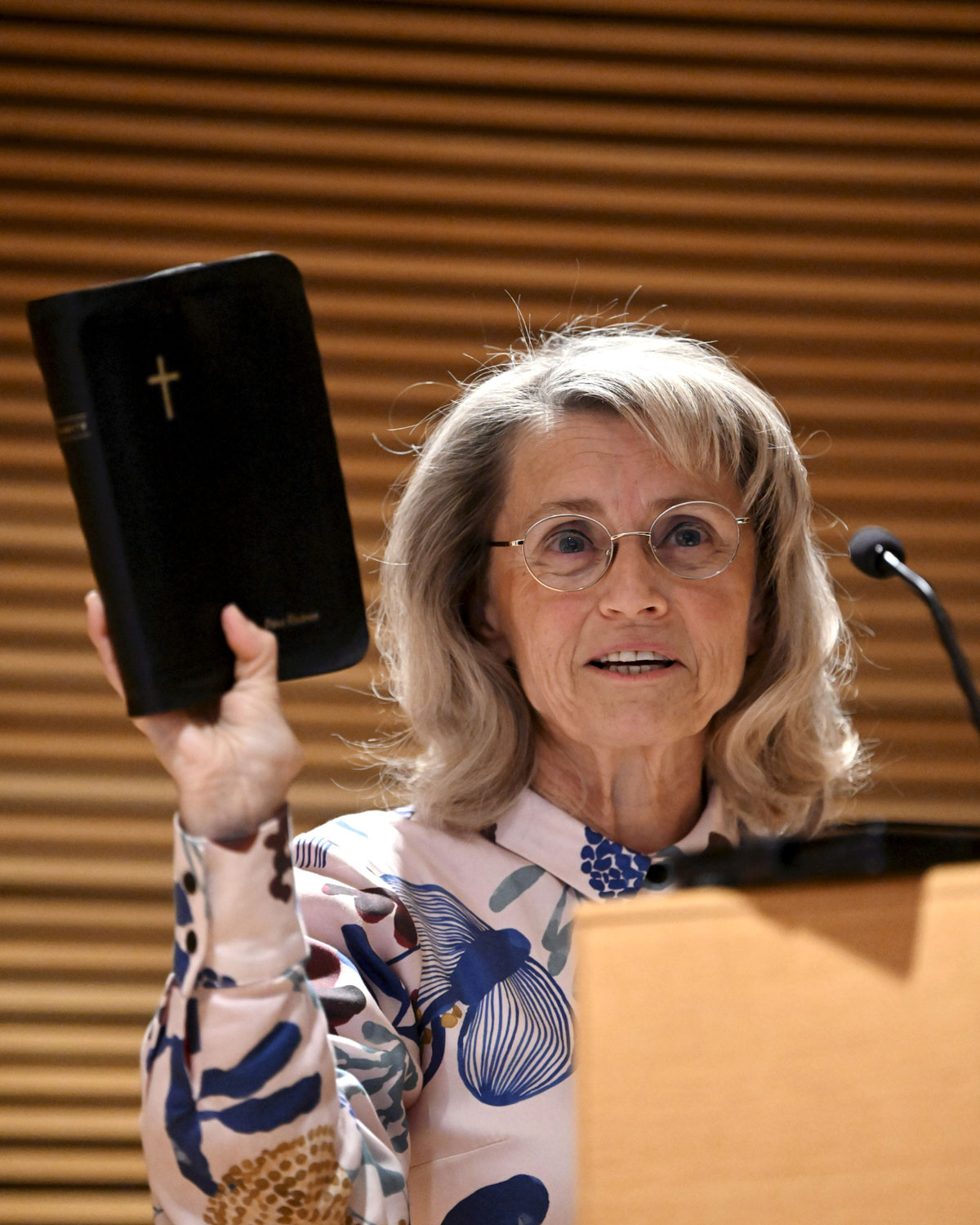

Facebook's Vice President of Content Policy Monika Bickert said the company will "take to heart" the board's suggestions. "Their recommendations will have a lasting impact on how we structure our policies," she said.

The board upheld just one of Facebook's decisions, which removed a Russian-language post that used an ethnic slur against Azerbaijanis.

The board's first ruling involved a user in Myanmar who posted in Burmese, questioning the lack of response by Muslims to the treatment of Uyghur Muslims in China. The post implied that there was something wrong with Muslim men. After Facebook removed the post, the Oversight Board determined Facebook's initial translation may have been inaccurate and ruled that while the statement was pejorative toward Muslims, it did not rise to the level of hate speech.

In its second ruling, the board upheld Facebook's decision to remove a post that used Russian-language wordplay, which Facebook said could be understood as an ethnic slur. After commissioning an independent linguistic analysis, the Oversight Board determined that the word was in fact a dehumanizing label for Azerbaijani people.

The board wrote, "In light of the dehumanizing nature of the slur and the danger that such slurs can escalate into physical violence, Facebook was permitted in this instance to prioritize people's 'Safety' and 'Dignity' over the user's 'Voice.'"

A third ruling restored an Instagram post removed by an automated system for violating the company's standards on nudity. The post by a user in Brazil aimed to raise awareness of breast cancer symptoms and included photos of female breasts and nipples that showed signs of cancer. The Oversight Board wrote that after it selected this case, Facebook restored the post and called the removal a technical error, then asked the board not to hear this case.

The board disagreed, writing that the case was important. "The incorrect removal of this post indicates the lack of proper human oversight which raises human rights concerns," the board wrote. The organization urged Facebook to change its policies to notify users when their content is moderated by automated systems, and to allow users to appeal certain moderation decisions to humans.

In its fourth decision, the board elected to restore a post that incorrectly attributed a quote to Nazi Germany leader Joseph Goebbels. The user shared the quote without context, but later told the board that the intent was to condemn Goebbels and draw a comparison between the sentiment in the quote and Trump's presidency.

Facebook's policy is to treat quotes attributed to dangerous individuals as expressions of support, unless the user adds context to suggest they condemn that individual. The board determined, however, that those policies were not clearly outlined to the public, and this user was not told which policy their post violated. The board advised Facebook to better notify users of its rules, and to more clearly designate which organizations and individuals can be considered "dangerous."

The board's final decision restored a post that criticized France's health strategy and falsely claimed that a cure for COVID-19 exists. The post criticized a French regulatory agency for refusing to authorize hydroxychloroquine for use against COVID-19 — a drug touted by some, including Mr. Trump, but not proven to prevent COVID-19. The board determined that while the post made false claims about a COVID cure, it should be restored because it did not pose imminent harm, a crucial element of Facebook's policies on misinformation.

The board also said that Facebook's misinformation rules were inconsistent and unclear. "A patchwork of policies found on different parts of Facebook's website make it difficult for users to understand what content is prohibited," the board wrote. "Changes to Facebook's COVID-19 policies announced in the company's Newsroom have not always been reflected in its Community Standards, while some of these changes even appear to contradict them."

Bickert said content from three of the cases had already been restored, and content from the fourth case was restored last year.