Facebook whistleblower testifies platform has "not earned the right to just have blind trust in them"

Frances Haugen, the Facebook whistleblower who first came forward in an explosive "60 Minutes" interview, told a Senate subcommittee on Tuesday that there is "no one" holding Mark Zuckerberg accountable except for himself.

"Facebook has not earned a right to just have blind trust in them," Haugen said. "Trust is ... last week one of the most beautiful things I heard on the committee was trust is earned. And Facebook has not earned our trust."

Haugen said the company suffered from "moral bankruptcy" and is "stuck in a loop it can't get out of."

Haugen worked as a product manager for the civic misinformation team at Facebook for nearly two years before she quit in May. She said that before leaving, she secretly copied tens of thousands of pages of Facebook internal research, which she said provides evidence the company has been lying to the public about making significant progress against hate, violence and misinformation.

Haugen gave many of the documents to The Wall Street Journal, which published reporting on the research that showed the company was aware of the harm it does to underage users. She also shared the internal research with Senator Richard Blumenthal, Democrat of Connecticut, and Senator Marsha Blackburn, Republican of Tennessee, who are both on the Senate subcommittee on Consumer Protection, Product Safety and Data Security. Haugen also filed a whistleblower complaint with the Securities and Exchange Commission.

While the hearing touched on a wide swath of Facebook's problems, it focused on the platform's impact on children, coming after the company paused its planned "Instagram for Kids" and a week after its global safety head defended children's use of the platform. Haugen compared the platform to cigarettes, and several lawmakers said Facebook needed to be treated like Big Tobacco.

"Facebook understands that if they want to continue to grow, they have to find new users," Haugen said. "They have to make sure that that next generation is just as engaged on Instagram as the current one. And the way they'll do that is by making sure that children establish habits before they have good self-regulation."

Facebook went on the offensive while Haugen testified, with spokespeople tweeting that she didn't work on "child safety or Instagram or research these issues and has no direct knowledge of the topic from her work at Facebook." The company also issued a statement after her testimony trying to discredit her.

Zuckerberg says idea that Facebook prioritizes profit above safety is "just not true"

Zuckerberg on Tuesday evening shared a letter he sent to Facebook employees following Haugen's testimony. In it, he said that the "idea that we prioritize profit over safety and well-being" is "just not true."

"The argument that we deliberately push content that makes people angry for profit is deeply illogical," Zuckerberg wrote. "We make money from ads, and advertisers consistently tell us they don't want their ads next to harmful or angry content. And I don't know any tech company that sets out to build products that make people angry or depressed. The moral, business and product incentives all point in the opposite direction."

Zuckerberg also defended Facebook's recent attempts to tailor certain products specifically for underage users, saying that he "found it difficult" to read what he called "the mischaracterization of the research into how Instagram affects young people."

Facebook says Haugen mischaracterized the company's work

Facebook said Tuesday that Haugen mischaracterized the company's work and stole the documents she turned over to lawmakers.

"If one teen on Instagram is having a bad experience, we need to do better. That's why we do the research. That's why we've built new features and tools along the way, like hiding the like count on Instagram or giving people the ability to stop people who might bully or harass them," Monika Bickert, VP of content policy at Facebook, said in an interview with CBS News congressional correspondent Kris Van Cleave.

Bickert argued that Facebook's internal research showed that for a majority of teens struggling with mental health and well-being issues, Instagram either makes things better or has no material impact. However, the same research, brought to light because of Haugen, also found that for a third of teenage girls, Instagram makes body image issues worse.

"We are doing the research exactly because we care about safety," Bickert said.

When asked about changes to the company's use of algorithms that determine what content users see on their feeds, Bickert said "people can always turn it off" and added that the company welcomes a conversation with lawmakers about changes to algorithmic rankings.

"We think that regulation about content on social media platforms is something that could be really beneficial for the public," Bickert said. "The government should have a voice here. We would like to be a part of the conversation," she added.

In a separate written statement, Lena Pietsch, Facebook's director of policy communication, said that Haugen did not work on child safety issues during her time at the company and claimed she never attended decision-point meetings with top-level executives.

Pietsch said Haugen, a former product manager at Facebook, worked at the company for less than two years. But the documents she provided to lawmakers support the allegations she is making.

"We don't agree with her characterization of the many issues she testified about," Pietsch said, adding that it's time for regulators to create a new set of rules for the internet. "Instead of expecting the industry to make societal decisions that belong to legislators, it is time for Congress to act," she said.

Haugen says she opposes breaking up Facebook

Despite some lawmakers' calls to split up Facebook, and the Federal Trade Commission's pursuit of an antitrust case that could force the company to break up, Haugen said she doesn't support breaking up the platform. In her view, a breakup wouldn't solve the issues of algorithms making bad decisions on the platforms, but would simply shift most of the problems to Instagram, she testified.

"If you split Facebook and Instagram apart, it's likely most of the advertising dollars go to Instagram, while Facebook continues to be this Frankenstein," with not enough resources to address problematic content on the platform, she said. The "systems will still exist," she added.

Instagram is the fastest-growing of Facebook's properties. Its revenue, currently estimated around $40 billion, is also growing at about 40% a year, Bloomberg Intelligence analyst Mandeep Singh previously told CBS News. That's three times as fast as Facebook proper, and significantly faster than digital advertising as a whole, which is growing at about 12% or 13% a year, Singh said.

"Have we seen a golden age of teen mental health over the past 10 years?" Haugen asks

Senator Cynthia Lummis asked Haugen what documents she would request from Facebook if she were a lawmaker. Haugen replied: "Any research on use, addictiveness of product, and what Facebook knows about parents' lack of knowledge of the platform."

Haugen said that in documents she read, parents were not aware of how "dangerous" Instagram is.

Lummis, a Republican from Wyoming, repeated that Facebook should be treated the same way as Big Tobacco.

Senator Dan Sullivan, Republican of Alaska, continued that line of questioning, asking whether in 20 years, Americans would look back and wonder "what the hell were we all thinking?"

Haugen also pushed back on assertions made last week by Antigone Davis, Facebook's global head of safety, that Facebook and Instagram helped lonely teens stay connected.

"When Facebook has made statements in the past about kids who were once alone, [I was] surprised about that," Haugen said. "If Instagram is a force, have we seen a golden age of teen mental health over the last 10 years? No. Broad research shows social media amplifies risk. Facebook's own research shows that. Kids are saying 'I am unhappy when I use Instagram, I can't stop, I'm afraid to be ostracized.'"

Haugen added that Facebook is "stuck in a loop that it can't get out of."

Congress likely to pass transparency laws: Bloomberg Intelligence

Despite Haugen's explosive allegations and a steady chorus of bipartisan condemnation from lawmakers, it's not certain that Tuesday's hearing will result in new regulations for Facebook.

The most likely outcome is that lawmakers will demand more access to Facebook's data, Bloomberg Intelligence analyst Matthew Schettenhelm said in a research note, calling it "the most legally defensible path for U.S. lawmakers."

Directly regulating the content Facebook allows is unlikely, because "social-media companies have free-speech rights that generally protect their ability to air and even amplify distasteful messages and misinformation," he wrote.

While such transparency could generate negative headlines for Facebook and other social media companies, it wouldn't "directly alter the companies' business models as direct limits on data use might," he wrote.

Haugen emphasized this point under questioning from Senator Ted Cruz. "Until we have transparency, we will not have a system compatible with democracy," she said, speaking in favor of a "regulatory body" that could force Facebook to disclose information. "Right now no one can force Facebook to release data," she said.

It's also possible that Congress will update a 1998 law limiting how companies can use children's data. Senator Ed Markey, one of the authors of the Children's Online Privacy Protection Act, repeatedly said on Tuesday he wants to update it to apply to older teens, as well as to ban targeted ads to children and limit influencer content.

Facebook spokespeople criticize Haugen

As Haugen testified, Andy Stone, Facebook's policy communications director, went on the offensive. He tweeted that Haugen "did not work on child safety or Instagram or research these issues and has no direct knowledge of the topic from her work at Facebook."

Despite criticism over the tweet, Stone repeated Haugen's own responses to lawmakers' questions, including a question from Senator Amy Klobuchar about teens being some of the platform's most profitable users, to which she replied that she didn't work on that.

Another Facebook spokesperson, Joe Osborne, tweeted that Facebook left in place some of the measures to safeguard against misinformation leading up to January 6 and that Haugen "didn't work on these efforts."

Haugen says "only Facebook knows how it personalizes your feed for you"

Haugen said "no one truly" understands the "destructive choices" made by Facebook except for Facebook. "Facebook's closed design means it has no real oversight," Haugen said. "Only Facebook knows how it personalizes your feed for you."

Haugen said that in the end, "the buck stops with Mark" Zuckerberg, and no one is holding him accountable but himself.

"Mark Zuckerberg ought to be looking at himself in the mirror today," she said. "And yet rather than taking responsibility and showing leadership, Mr. Zuckerberg is going sailing."

Blumenthal said Zuckerberg's new policy is "No apologies. No admissions. No acknowledgment."

Problematic ads approved

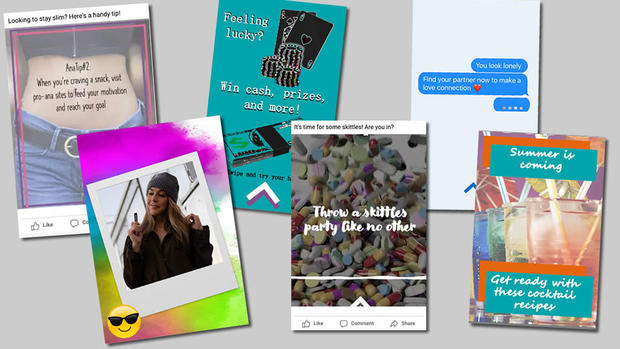

Doubling down on the idea that Facebook can harm young users, Senator Mike Lee, Republican of Utah, showed examples of three fake ads that Facebook approved, promoting anorexia and drug use.

One ad had the words "throw a Skittles party like no other" against an image of pills. Another contained advice on eating less, using common slang for anorexia. The words, against an image of a young woman's bare stomach, read: "AnaTip #2: When you're craving a snack, visit pro-ana sites to feed your motivation and reach your goal."

The ads, which never ran, were created by the Tech Transparency Project to draw attention to what the group said were holes in Facebook's ad approval process. The TTP tried the experiment this spring and again in September; the ads were approved last month, the group said.

Responding to Lee's question on how those ads could be approved, Haugen theorized that algorithms could be to blame.

While Haugen noted she never worked on the company's ad approval team, she said, "Facebook has a deep focus on scale. Scale is, 'Can we do things cheaply for many people?' That's why they rely on AI. It's possible none of those ads were seen by a human."

Hate speech on the platform suffers from the same problem, she said. In a best-case scenario, Facebook catches 10 to 20% of hate speech, she said.

Whistleblower: 5% of teens "addicted"

Facebook whistleblower Frances Haugen told lawmakers Tuesday that the social media company's internal research shows at least 5% of teenagers on Instagram are addicted to the service and said it is likely that far more kids are hooked.

Haugen said Facebook is aware that its algorithms lead children from "very innocuous topics like healthy recipes" to "anorexia promoting content in a very short period of time."

"Many of Facebook's internal research reports indicate that Facebook has a serious negative harm on a significant portion of teenagers and younger children," Haugen said.

Haugen also noted that Facebook's CEO Mark Zuckerberg, who holds over 55% of voting shares in the company, is ultimately responsible for the decisions being made at the company.

"There is no one holding Mark [Zuckerberg] accountable but himself," Haugen said. "There is no unilateral responsibility, the metrics make the decisions," she added.

How to watch Facebook whistleblower Frances Haugen testify before Senate committee

What: Frances Haugen testifies before Senate subcommittee

Date: Tuesday, October 5, 2021

Time: 10 a.m. ET

Location: Russell Building – Washington, D.C.

Online stream: Live on CBSN in the player above and on your mobile or streaming device.